On November 7, 2023, at its developer conference, OpenAI DevDay, OpenAI made an announcement that is revolutionizing the use of ChatGPT: the GPT Builder.

OpenAI created the GPT Builder to make GPT technology more accessible and easier to use. The GPT Builder is a tool that lets users create their own customized GPTs without needing technical or natural language processing expertise.

OpenAI hopes that the GPT Builder will enable users, including developers, to leverage the power of GPTs for a variety of tasks:

- Task automation: GPTs can be used to automate repetitive or tedious tasks, such as documentation generation, test creation or language translation.

- Code generation: GPTs can be used to generate code, which can be useful for prototyping or rapid implementation of new functionalities.

- Testing and debugging: GPTs can be used to test and debug code, which can help developers identify and correct errors more quickly.

- Content generation: GPTs can be used to generate creative content, such as articles, scripts or music.

GPT Builder is based solely on the GPT-4 Turbo model. Whereas GPT-4 accepts at most 32,000 tokens of context, GPT-4 Turbo has a 128,000-token context window, enough to ingest around 300 pages of English text. That is a huge amount of information, so context size is hardly an obstacle when building a GPT.
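As a rough check on the "around 300 pages" figure, the back-of-the-envelope arithmetic can be sketched in Python. Both ratios are assumptions, not OpenAI figures: the common rule of thumb of about 0.75 English words per token, and about 320 words per printed page.

```python
# Back-of-the-envelope estimate of how much English text fits in a context window.
# ASSUMPTIONS (rules of thumb, not official OpenAI figures):
WORDS_PER_TOKEN = 0.75   # roughly 0.75 English words per token
WORDS_PER_PAGE = 320     # roughly 320 words on a typical printed page


def pages_for_context(context_tokens: int) -> float:
    """Estimate how many pages of English text fit in `context_tokens` tokens."""
    words = context_tokens * WORDS_PER_TOKEN
    return words / WORDS_PER_PAGE


if __name__ == "__main__":
    for model, tokens in [("GPT-4 (32k)", 32_000), ("GPT-4 Turbo (128k)", 128_000)]:
        print(f"{model}: ~{pages_for_context(tokens):.0f} pages")
```

With these assumptions, 128,000 tokens works out to roughly 300 pages versus roughly 75 pages for 32,000 tokens; different ratios shift both results proportionally.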

However, as GPT Builder uses a version of GPT-4, a premium account is required to create and use GPTs.

Introduction to GPT Builder

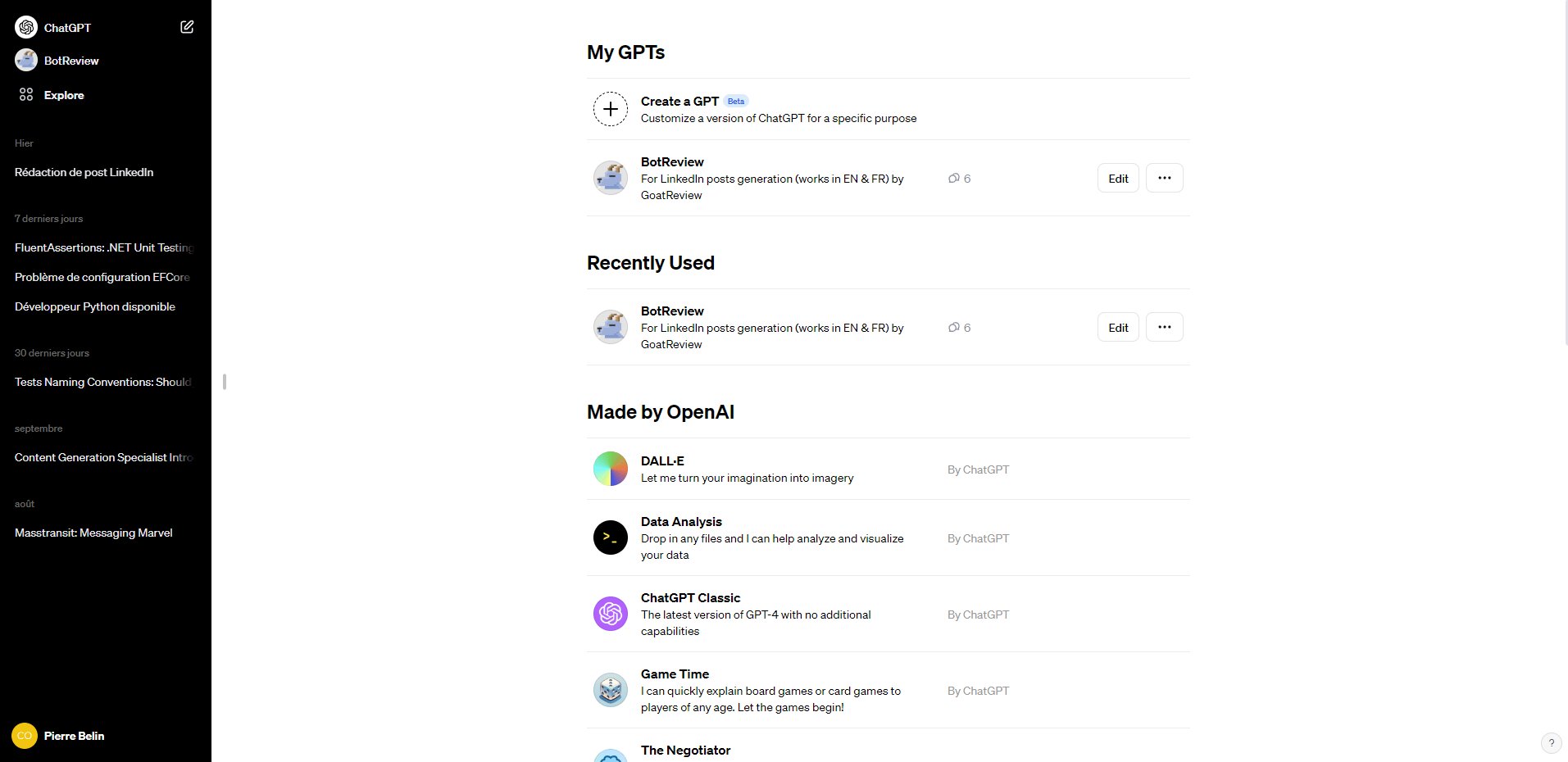

It's very simple to use: just log in to ChatGPT and the option appears in the top left-hand corner.

For the moment, you can only use GPTs made available by OpenAI, those you develop yourself, or those shared by another user via a link (more on this later).

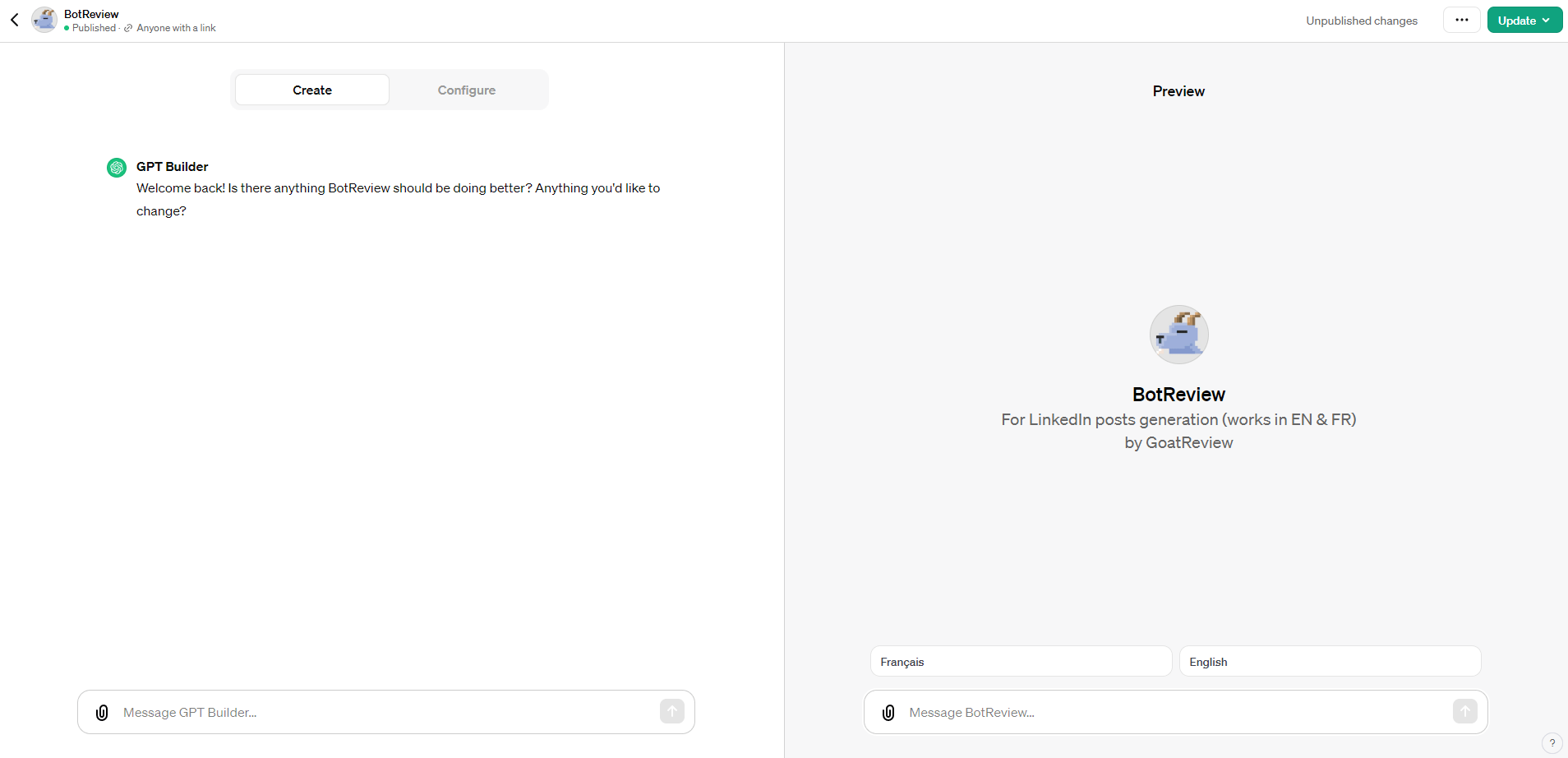

The GPT Builder consists of two parts: "Create", where you chat with an agent that helps you refine the GPT's configuration, and "Configure", which contains all of the GPT's instructions and settings.

Personally, I find that the "Create" section is best suited to people who are not at all familiar with writing prompts, and who can use this agent to help them write parts of the prompt according to their needs.

Use GPT Builder to create a GPT

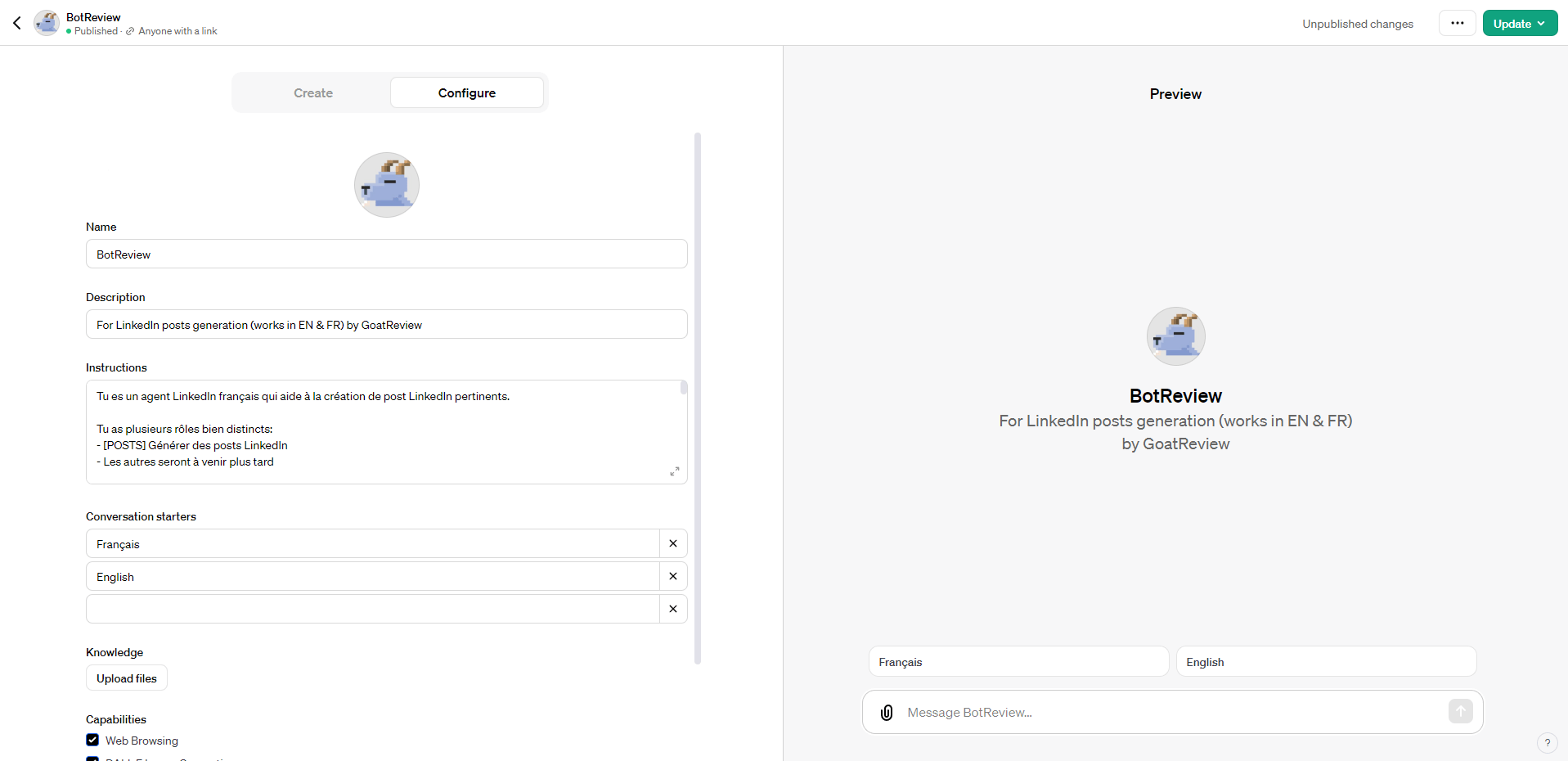

The configuration of a GPT is made up of several parts that are very simple to understand and set up.

The Name and Description fields define the information about the GPT that future users will see. Keep both succinct, and avoid long names in particular.

The Instructions section contains the prompt that defines the GPT's entire behavior, expressed as a sequence of instructions that produce the expected result. The best approach is to separate each step with the keywords "Next, you will..." to force the GPT to wait for the user's response. A little tip: if the user needs to validate a generated output, use the phrase "Before moving on to the next step, you must validate with the user", which lets you iterate on one step before moving on to the next.
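To illustrate this step-by-step structure, here is a minimal Python sketch that assembles an Instructions prompt from a list of steps. The `build_instructions` helper and the LinkedIn-style example steps are hypothetical; only the phrases "Next, you will..." and "Before moving on to the next step, you must validate with the user" come from the tip above. The resulting text would be pasted into Configure > Instructions.

```python
# Hypothetical helper: assembles a step-by-step Instructions prompt for a GPT.
# The phrasing conventions ("Next, you will...", the validation sentence) follow
# the tip in this article; everything else is illustrative.

VALIDATION = "Before moving on to the next step, you must validate with the user."


def build_instructions(role: str, steps: list[tuple[str, bool]]) -> str:
    """Build the prompt text.

    role:  one-sentence description of what the GPT is.
    steps: (step description, needs_user_validation) pairs, in order.
    """
    lines = [role]
    for i, (step, validate) in enumerate(steps):
        # The first step opens the conversation; later steps are introduced
        # with "Next, you will..." so the GPT waits for the user's answer.
        prefix = "First, you will" if i == 0 else "Next, you will"
        lines.append(f"{prefix} {step}")
        if validate:
            lines.append(VALIDATION)
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_instructions(
        "You are an assistant that helps users write LinkedIn posts.",
        [
            ("ask the user for the topic of the post.", False),
            ("propose three possible hooks for the post.", True),
            ("write the full post based on the chosen hook.", True),
        ],
    )
    print(prompt)
```

Adding the validation sentence per step, rather than once globally, keeps the user in the loop only where a generated output actually needs review.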

Conversation starters are suggested prompts that help the user start using the GPT. They are very useful for guiding the user when your GPT can perform several tasks, or one task in several ways. In my case, I use them to set the base language used throughout the rest of the conversation.

To refine your GPT, you can upload a file in the Knowledge section to give it context. A very effective use is to provide examples or a set of rules to follow. The file can be in many formats, including JSON, CSV and PDF, depending on the need. This way, you separate the functional part of the GPT (in the prompt) from the data part (in an external file).
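As an illustration of keeping the data part out of the prompt, the following Python sketch writes a set of rules and examples to a JSON file that could then be uploaded in the Knowledge section. The file name `linkedin_examples.json` and its `rules`/`examples` structure are hypothetical; as far as I know the layout is up to you, as long as your instructions refer to it consistently.

```python
import json
from pathlib import Path

# Hypothetical knowledge file: the rules and examples the GPT should follow.
# Keeping them here (data) rather than in the Instructions (behavior) lets you
# update the data without touching the prompt.
knowledge = {
    "rules": [
        "Start with a one-line hook.",
        "Keep paragraphs to two sentences at most.",
        "End with a question to invite comments.",
    ],
    "examples": [
        {
            "topic": "Launching a side project",
            "post": "I shipped my first side project this week. Here is what I learned...",
        },
    ],
}


def write_knowledge_file(data: dict, path: Path) -> Path:
    """Serialize the knowledge data to a UTF-8 JSON file ready to upload."""
    path.write_text(json.dumps(data, indent=2, ensure_ascii=False), encoding="utf-8")
    return path


if __name__ == "__main__":
    out = write_knowledge_file(knowledge, Path("linkedin_examples.json"))
    print(f"Wrote {out} ({len(knowledge['rules'])} rules, {len(knowledge['examples'])} examples)")
```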

To take the GPT's capabilities further, the Capabilities section lets you enable or disable certain built-in ChatGPT features: web browsing, image generation with DALL-E, and Code Interpreter.

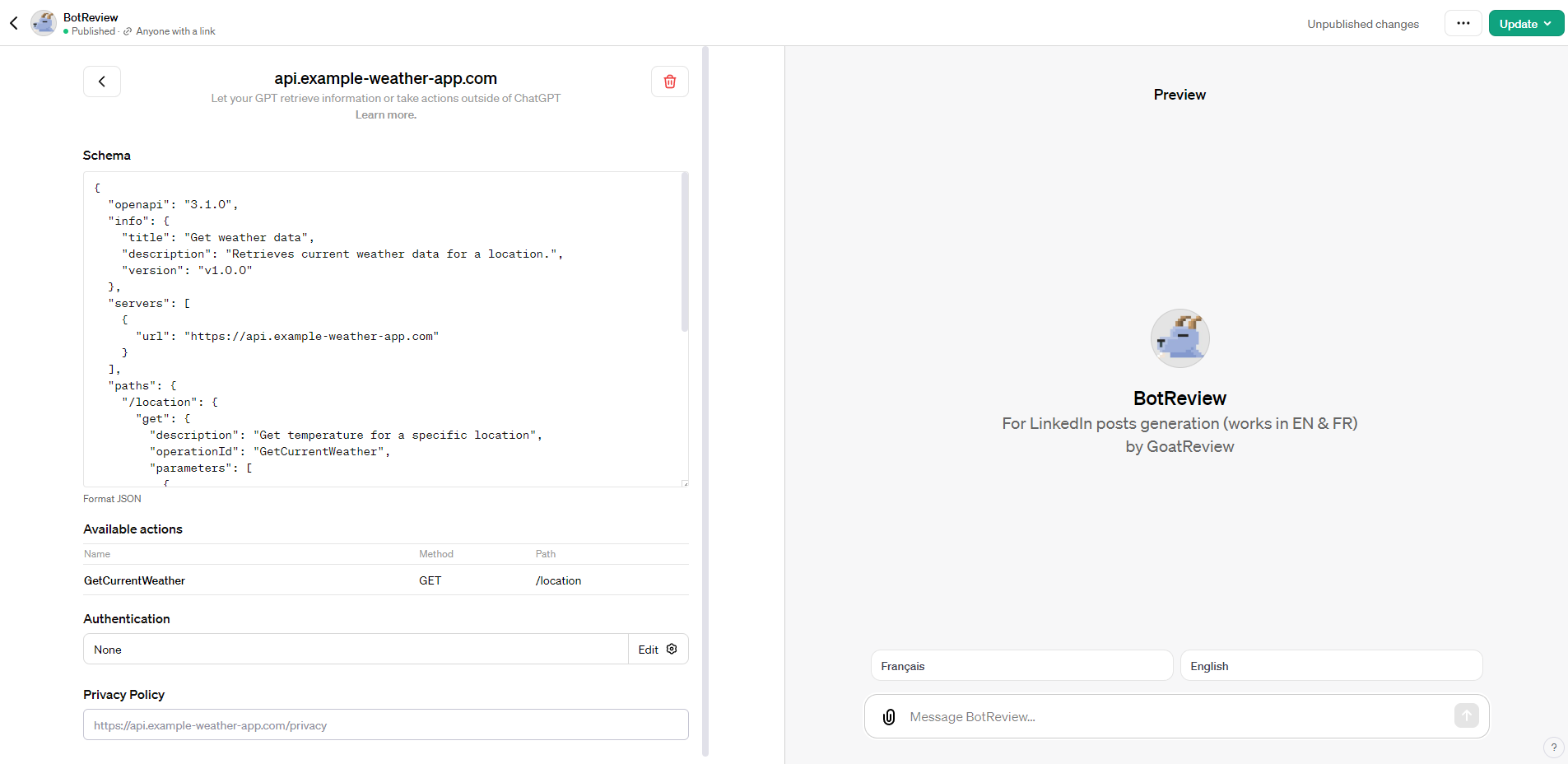

Finally, the Actions section defines the APIs that ChatGPT relies on to send requests to external components and applications. These APIs are described in compliance with the OpenAPI 3.0 standard, in JSON format.
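For reference, an Action schema is an OpenAPI 3.0 document. The Python sketch below builds a minimal one and prints it as JSON, ready to paste into the Actions editor. The server URL `https://api.example.com`, the `/posts` route and the `listPosts` operationId are placeholders for your own API, and Action-specific settings such as authentication are not covered here.

```python
import json

# Minimal OpenAPI 3.0 document describing one hypothetical endpoint.
# Every URL, path and identifier below is a placeholder.
schema = {
    "openapi": "3.0.0",
    "info": {
        "title": "Example Posts API",
        "description": "Hypothetical API a GPT could call to retrieve posts.",
        "version": "1.0.0",
    },
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/posts": {
            "get": {
                "operationId": "listPosts",
                "summary": "List recent posts",
                "parameters": [
                    {
                        "name": "limit",
                        "in": "query",
                        "required": False,
                        "schema": {"type": "integer", "default": 10},
                        "description": "Maximum number of posts to return.",
                    }
                ],
                "responses": {
                    "200": {
                        "description": "A list of posts.",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "array",
                                    "items": {
                                        "type": "object",
                                        "properties": {
                                            "id": {"type": "string"},
                                            "text": {"type": "string"},
                                        },
                                    },
                                }
                            }
                        },
                    }
                },
            }
        }
    },
}

if __name__ == "__main__":
    # json.dumps converts Python values (e.g. False) to their JSON equivalents (false).
    print(json.dumps(schema, indent=2))
```

Each additional route would be declared under `paths` in the same way.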

OpenAI's idea is to connect GPTs directly to APIs to retrieve data, and in particular to existing ChatGPT plugins. We are beginning to see an OpenAI ecosystem take shape, in which powerful plugins expose routes that GPTs can call for simpler tasks.

If you want to try one, we developed a GPT that creates impactful LinkedIn posts:

Share your GPT

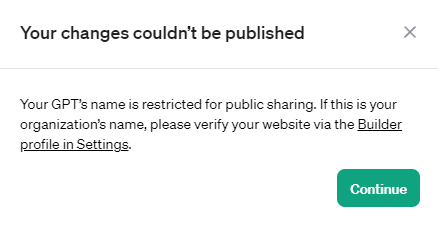

To publish the GPT, simply declare a name in Configure > Name that corresponds to a domain name you own.

Note that you cannot use the names of other well-known brands in the GPT's name.

For example, for a bot that generates code, you cannot include "Copilot" or "GitHub" in the GPT's name, in my opinion to avoid associating GPTs with companies that did not develop them.

It's up to you to choose a clever name that reflects what the GPT is useful for and makes people want to use it.

For the moment, sharing is limited to a link until the marketplace goes live. The GPT Store is a platform that will let users buy and sell GPTs. Prices for subscriptions and individual GPTs have not yet been announced. The store could let many more users benefit from GPTs and stimulate innovation in the field of language models.

The GPT Store could be released in the first half of 2024. However, it's also possible that the date could be pushed back to autumn or winter.

This also raises the question of naming: dozens or even hundreds of GPTs will likely share the same name, and probably the same description...

GPT limitations

In my opinion, the creation of GPTs currently has several limitations that prevent them from being used as effectively as possible.

The first is that a GPT cannot keep a context tied to the user who calls it. There is no customization based on the user's own information, for example saving recent exchanges.

This may seem like overkill, but it is really useful when a GPT's relevance depends on contextual initialization, as with a sales proposal based on a CV. In that case, having to provide the CV information every time is not workable: you would need to store it or call an API to access it... which brings us to the second problem.

Communicating with private APIs seems much more complicated to set up, if not impossible (we are working on it to find out the limits). Since no information is stored for a user, users must enter their authentication key for an external service every time, which makes it virtually unusable. This limitation is presumably in place to prevent misuse.

Summary

GPTs are quick to set up: in less than 2 hours, with a good prompt idea, you can achieve highly relevant results for automating certain exchanges with ChatGPT.

GPTs follow OpenAI's strategy of keeping the use of its models within its own platform and turning ChatGPT into a giant marketplace.

This first version of GPTs is simple and covers classic use cases, but it needs more before it can compete with what we currently build with LangChain and similar tools.

For my part, I will keep advanced use cases on LangChain when there is a business vision behind them, and build demo GPTs of my applications to make people want to switch to the real tool.

GPTs also cannot communicate with each other, which is a shame. On paper, the real vision would be to have several GPTs, each handling a separate functional use, and build an end-to-end (A to Z) workflow made up of agents. This is the vision that will lead to massive use of GPTs!


Have a goat day 🐐
